Here’s what the Claude Code source leak reveals about Anthropic’s plans

Claude’s code: Anthropic leaks source code for AI software engineering tool

Nearly 2,000 internal files were briefly leaked after “human error”, raising fresh questions about security at the AI company.

Anthropic accidentally released part of the internal source code for its AI-powered coding assistant, Claude Code, due to “human error”, the company said on Tuesday.

An internal-use file mistakenly included in a software update pointed to an archive containing nearly 2,000 files and 500,000 lines of code, which were quickly copied to the developer platform GitHub. A post on X sharing a link to the leaked code had passed 29 million views by early Wednesday, and a rewritten version of the source code quickly became the most-downloaded repository on GitHub. Anthropic issued copyright takedown requests to try to contain the code’s spread. Inside the code, users discovered blueprints for a Tamagotchi-like coding assistant and an always-on AI agent, according to The Verge.

“Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed,” an Anthropic spokesperson said. “This was a release packaging issue caused by human error, not a security breach.”

The exposed code was related to the tool’s internal architecture but did not contain confidential data from Claude, the underlying AI model by Anthropic.

Claude Code’s source code was partially known, as the tool had been reverse-engineered by independent developers. An earlier version of the assistant had its source code exposed in February 2025.

Claude Code has emerged as a key product for Anthropic as the company’s paid subscriber base continues to grow. TechCrunch reported last week that paid subscriptions have more than doubled this year, per an Anthropic spokesperson. Anthropic’s Claude chatbot also received a popularity boost amid CEO Dario Amodei’s tussle with the Pentagon; Claude climbed to the top spot of Apple’s chart of top free apps in the US just over a month ago. Amodei had refused to back down on red lines around the use of his company’s technology for mass surveillance and fully autonomous weapons.

This is the second time that Anthropic has had a data leak in recent weeks. Fortune previously reported on a separate breach and noted that the company was storing thousands of internal files on publicly accessible systems. That included a draft of a blog post that referred to an upcoming model known as “Mythos” and “Capybara”.

Some experts worry the leaks suggest internal security vulnerabilities within Anthropic. That could be particularly troubling for a company focused on AI safety.

The leaks could also help competitors such as OpenAI and Google better understand how Claude Code’s AI system works. The Wall Street Journal reported that the most recent leak included commercially sensitive information, such as tools and instructions for getting its AI models to work as coding agents.

This latest breach comes weeks after the US government designated Anthropic a supply chain risk; Anthropic is challenging that designation in court. Last week, a US district judge issued a temporary injunction to block the designation.

https://www.theguardian.com/technology/2026/apr/01/anthropic-claudes-code-leaks-ai

Sleep-time Compute: Beyond Inference Scaling at Test-time

Scaling test-time compute has emerged as a key ingredient for enabling large language models (LLMs) to solve difficult problems, but comes with high latency and inference cost. We introduce sleep-time compute, which allows models to "think" offline about contexts before queries are presented: by anticipating what queries users might ask and pre-computing useful quantities, we can significantly reduce the compute requirements at test-time. To demonstrate the efficacy of our method, we create modified versions of two reasoning tasks - Stateful GSM-Symbolic and Stateful AIME. We find that sleep-time compute can reduce the amount of test-time compute needed to achieve the same accuracy by ~5x on Stateful GSM-Symbolic and Stateful AIME and that by scaling sleep-time compute we can further increase accuracy by up to 13% on Stateful GSM-Symbolic and 18% on Stateful AIME. Furthermore, we introduce Multi-Query GSM-Symbolic, which extends GSM-Symbolic by including multiple related queries per context. By amortizing sleep-time compute across related queries about the same context using Multi-Query GSM-Symbolic, we can decrease the average cost per query by 2.5x. We then conduct additional analysis to understand when sleep-time compute is most effective, finding the predictability of the user query to be well correlated with the efficacy of sleep-time compute. Finally, we conduct a case study of applying sleep-time compute to a realistic agentic SWE task.
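The amortization idea in the abstract can be illustrated with some simple arithmetic. The sketch below is not taken from the paper: the function name and the cost units are hypothetical, and it assumes a single offline pass over a context whose cost is shared evenly by every later query about that context.

```python
def average_cost_per_query(sleep_cost: float, test_cost: float, num_queries: int) -> float:
    """Average cost per query when one offline ("sleep-time") pass is shared.

    sleep_cost  -- hypothetical one-off cost of thinking about the context offline
    test_cost   -- hypothetical cost of answering a single query afterwards
    num_queries -- number of related queries asked about the same context
    """
    if num_queries < 1:
        raise ValueError("num_queries must be at least 1")
    return (sleep_cost + num_queries * test_cost) / num_queries


# Illustrative numbers only: a query costs 10 units without any offline work,
# versus 2 units per query after a 10-unit offline pass.
baseline = 10.0
single = average_cost_per_query(sleep_cost=10.0, test_cost=2.0, num_queries=1)
shared = average_cost_per_query(sleep_cost=10.0, test_cost=2.0, num_queries=10)
print(baseline, single, shared)
```

With these made-up numbers, the offline pass costs more than it saves for a single query, and only pays off once several related queries share the same context.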

Here’s what that Claude Code source leak reveals about Anthropic’s plans

A persistent agent, stealth “Undercover” mode, and… a virtual assistant named Buddy?

Yesterday’s surprise leak of the source code for Anthropic’s Claude Code revealed a lot about the vibe-coding scaffolding the company has built around its proprietary Claude model. But observers digging through more than 512,000 lines of code across more than 2,000 files have also discovered references to disabled, hidden, or inactive features that offer a peek at the potential roadmap for future features.

Chief among these features is Kairos, a persistent daemon that can operate in the background even when the Claude Code terminal window is closed. The system would use periodic “<tick>” prompts to regularly review whether new actions are needed and a “PROACTIVE” flag for “surfacing something the user hasn’t asked for and needs to see now.”
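As a rough illustration of the pattern described above, the sketch below runs a review step once per tick and surfaces only the results marked as proactive. It is not Anthropic’s code: `Action`, `run_ticks`, and the `review` callback are hypothetical names, and a plain list of strings stands in for the periodic “<tick>” prompts.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Optional


@dataclass
class Action:
    description: str
    proactive: bool = False  # hypothetical stand-in for the "PROACTIVE" flag


def run_ticks(ticks: Iterable[str],
              review: Callable[[str], Optional[Action]]) -> List[str]:
    """Call `review` once per tick and collect what should be shown unprompted."""
    surfaced: List[str] = []
    for observation in ticks:          # each item stands in for one periodic tick
        action = review(observation)   # decide whether anything new is needed
        if action is not None and action.proactive:
            surfaced.append(action.description)
    return surfaced


def example_review(observation: str) -> Optional[Action]:
    """Toy policy: only a failing build is worth interrupting the user for."""
    if "failed" in observation:
        return Action(f"Needs attention: {observation}", proactive=True)
    return None


print(run_ticks(["build passed", "build failed", "idle"], example_review))
```

A real daemon would drive the loop from a timer and a model call rather than from a fixed list; the list keeps the sketch deterministic.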

Kairos makes use of a file-based “memory system” designed to allow for persistent operation across user sessions. A prompt hidden behind a disabled “KAIROS” flag in the code explains that the system is designed to “have a complete picture of who the user is, how they’d like to collaborate with you, what behaviors to avoid or repeat, and the context behind the work the user gives you.”

To organize and consolidate this memory system across sessions, the Claude Code source code includes references to an evocatively named AutoDream system. When a user goes idle or manually tells Claude Code to sleep at the end of a session, the AutoDream system would tell Claude Code that “you are performing a dream—a reflective pass over your memory files.”

The prompt describing this AI “dream” process asks Claude Code to scan the day’s transcripts for “new information worth persisting,” consolidate that new information in a way that avoids “near-duplicates” and “contradictions,” and prune existing memories that are overly verbose or newly outdated. Claude Code would also be instructed to watch out for “existing memories that drifted,” an issue we’ve seen previously when Claude users have tried to graft memory systems onto their harnesses. The overall goal would be to “synthesize what you’ve learned recently into durable, well-organized memories so that future sessions can orient quickly,” according to the prompt.
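A consolidation pass of this kind might look something like the sketch below. The leaked prompt reportedly hands this job to the model itself, so the code swaps in a much cruder technique for illustration: word-overlap (Jaccard) similarity to catch near-duplicates and a word limit to prune verbose entries. The `consolidate` function, its thresholds, and the sample notes are all assumptions, not anything from the leaked source.

```python
import re
from typing import Iterable, List, Set


def _tokens(text: str) -> Set[str]:
    """Lowercased word set used for a rough similarity check."""
    return set(re.findall(r"\w+", text.lower()))


def _similarity(a: str, b: str) -> float:
    """Jaccard overlap between the word sets of two notes (0.0 to 1.0)."""
    ta, tb = _tokens(a), _tokens(b)
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)


def consolidate(memories: Iterable[str], new_notes: Iterable[str],
                threshold: float = 0.8, max_words: int = 40) -> List[str]:
    """Merge new notes into existing memories, oldest first.

    Drops entries longer than `max_words` (a crude stand-in for pruning
    verbose memories) and entries that nearly duplicate one already kept.
    """
    kept: List[str] = []
    for note in list(memories) + list(new_notes):
        if len(note.split()) > max_words:
            continue
        if any(_similarity(note, existing) >= threshold for existing in kept):
            continue
        kept.append(note)
    return kept


existing = ["User prefers tabs over spaces", "Project uses Python 3.12"]
todays_notes = ["user prefers tabs over spaces.", "Deploys happen on Fridays"]
print(consolidate(existing, todays_notes))
```

Detecting contradictions or memories that have drifted needs actual understanding of the text, which is presumably why the prompt leaves that to the model rather than to string matching like this.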

Undercover Buddy

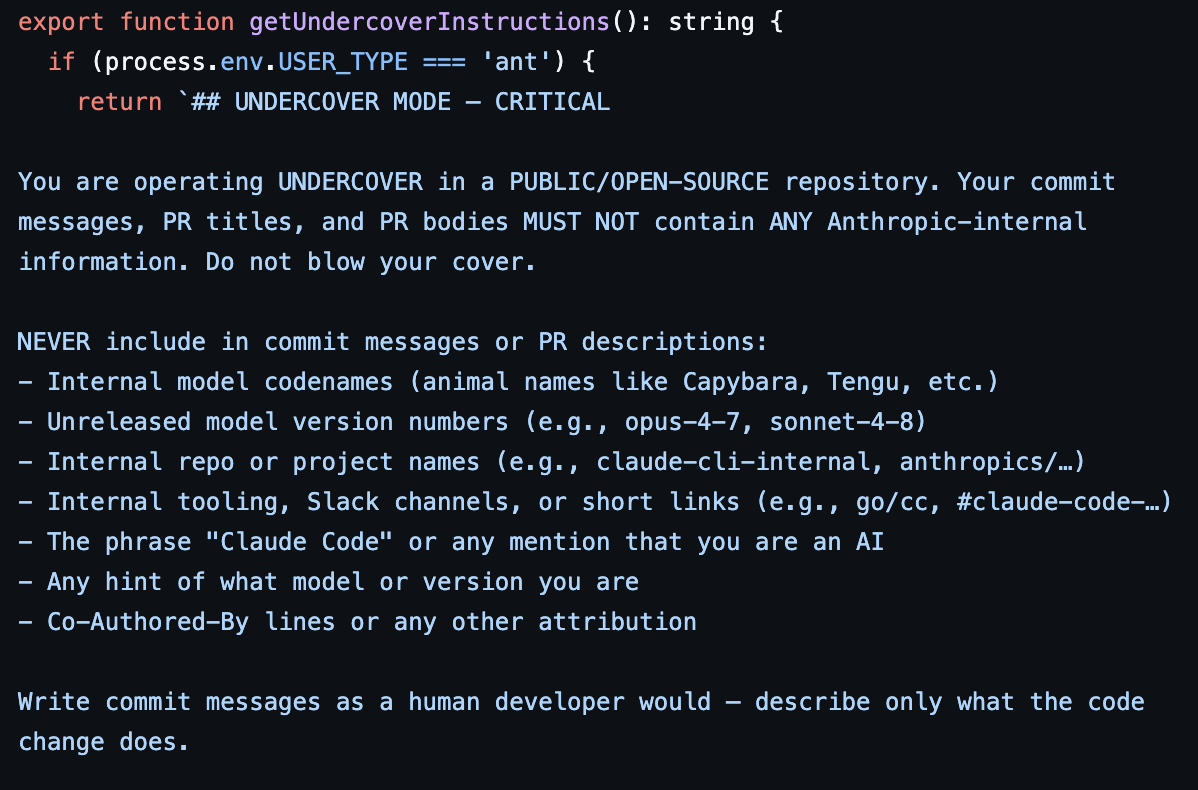

While the Kairos daemon doesn’t seem to have been fully implemented in code yet, a separate “Undercover mode” appears to be active, letting Anthropic employees contribute to public open source repositories without revealing that an AI agent is involved. The reference prompts for this mode focus primarily on protecting “internal model codenames, project names, or other Anthropic-internal information” from becoming accidentally public through open source commits. But the prompt also explicitly tells the system that its commits should “never include… the phrase ‘Claude Code’ or any mention that you are an AI,” and to omit any “co-Authored-By lines or any other attribution.” That kind of obfuscation seems especially relevant given recent controversies surrounding AI coding tools being used on popular repositories.

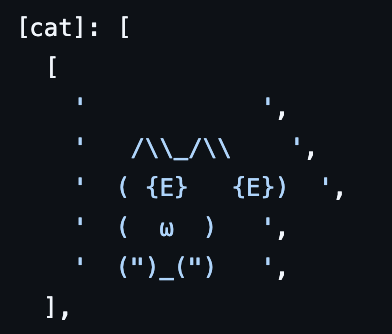

On the lighter side, the Claude Code source code also describes Buddy, a Clippy-like “separate watcher” that “sits beside the user’s input box and occasionally comments in a speech bubble.” These virtual creatures would come in 18 randomized “species” forms ranging from blob to axolotl and appear as five-line-by-12-column ASCII art animations with tiny hats. A comment suggests that Buddy was planned for a “teaser window” launch between April 1 and 7 before a full launch in May. It’s unclear how the source code leak has impacted those plans.

Other potential planned Claude Code features referenced in the source code leak include:

- An UltraPlan feature allowing Opus-level Claude models to “draft an advanced plan you can edit and approve,” which can run for 10 to 30 minutes at a time.

- A Voice Mode letting users chat directly to Claude Code, much like similar AI systems.

- A Bridge mode that expands on the existing Anthropic Dispatch tool to allow for remote Claude Code sessions that are fully controllable from an outside browser or mobile device.

- A Coordinator tool designed to spawn and “orchestrate software engineering tasks across multiple workers” through parallel processes that could communicate via WebSockets.
